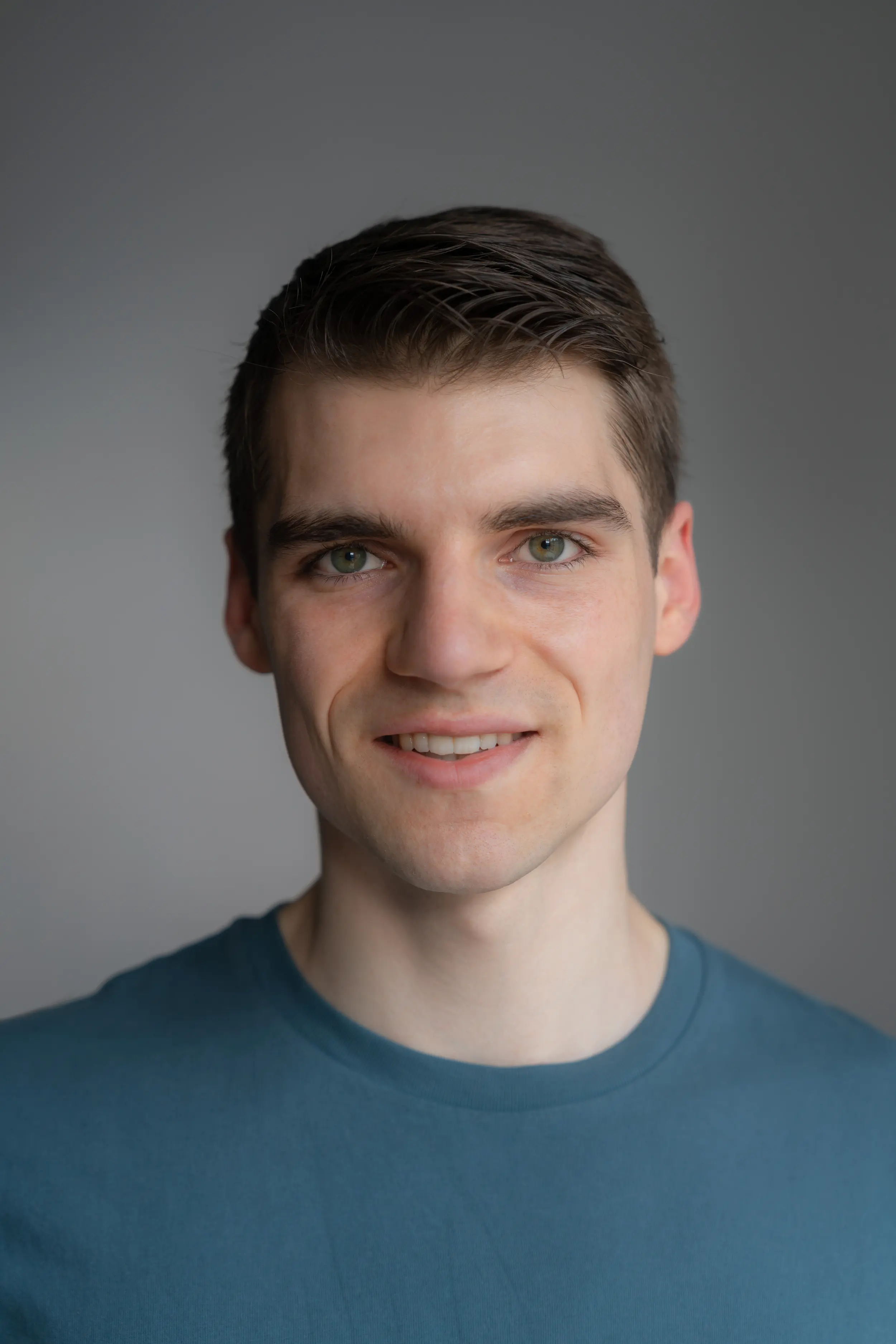

Hi, I'm Maxime. I'm a PhD candidate in machine learning and a founding engineer at iGent AI.

I work on building long-running autonomous software engineering and research agents. This spans LLM post-training, coding eval generation for frontier models, and automated agent harness optimisation.

Some topics I'm interested in include:

- using probabilistic machine learning to guide decision making under uncertainty, optimising resource use in autonomous agents,

- deep learning theory, including understanding training dynamics and architectural choices,

- open-endedness and self-improving AI systems,

- using LLMs to make 'judgemental forecasts' or answer questions with uncertainty, so as to leverage large pre-trained models and unstructured data as a supplement to traditional numerical data in forecasting or decision making.

Blog Articles

I write articles related to things I'm working on. Here are the latest ones:

-

Weight-Init Conditioned Bayesian Neural Network Priors

An alternative Bayesian neural network prior that we might believe a little more, but that sadly doesn’t work very well.

-

Bayesian Low-Rank Adaptation for Large Language Models

An overview of some recent work, published in ICLR 2024, where we estimate the uncertainty and marginal likelihoods in LLMs using Bayesian LoRA adapters. We focus on the fine-tuning setting, and scale our method to LLMs using a Laplace approximation with low-rank K-FAC.

-

Second-Order Methods in Machine Learning

A motivation of the Hessian from an optimisation perspective (and the related Generalised Gauss-Newton / Fisher Information Matrix), an introduction to Kronecker-factored approximate curvature, and applications of the curvature in machine learning.

Papers

Some papers I have written or co-authored.

-

Improving LLM-Generated Code Quality with GRPO

Maxime Robeyns, Laurence Aitchison

2025 – RLC workshop

https://arxiv.org/abs/2506.02211

-

A Self-Improving Coding Agent

Maxime Robeyns, Martin Szummer, Laurence Aitchison

2025 – ICLR SSM-FM, Oral

https://arxiv.org/abs/2504.15228

-

Bayesian Reward Models For LLM Alignment

Adam X. Yang, Maxime Robeyns, Thomas Coste, Jun Wang, Haitham Bou-Ammar, Laurence Aitchison

2024 – ICLR SeT-LLM

https://arxiv.org/abs/2402.13210

-

Bayesian Low-rank Adaptation for Large Language Models

Adam X. Yang, Maxime Robeyns, Xi Wang, Laurence Aitchison

2024 – ICLR

https://arxiv.org/abs/2308.13111

-

Taylor TD-learning

Michele Garibbo, Maxime Robeyns, Laurence Aitchison

2023 – NeurIPS

https://arxiv.org/abs/2302.14182

-

A Theory of Representation Learning in Deep Neural Networks Gives a Deep Generalisation of Kernel Methods

Adam X. Yang, Maxime Robeyns, Edward Milsom, Nandi Schoots, Laurence Aitchison

2023 – ICML

https://proceedings.mlr.press/v202/yang23k/yang23k.pdf

-

Fast Estimation of Physical Galaxy Properties using Simulation-Based Inference

Maxime Robeyns, Sotiria Fotopoulou, Mike Walmsley, Laurence Aitchison

2022 – ICML 2022 Workshop on Machine Learning for Astrophysics

https://ml4astro.github.io/icml2022/assets/12.pdf

Fragments

Any other drivel and shower thoughts end up in my "fragments". Here are the last ones: